(from the Analystprep.com course on quantitative analysis)

I think about this a lot.

Thirty-five or so years ago, I was reading one of the annual Bill James Baseball Abstracts. Bill James was one of the first (and best) statisticians working in baseball. For instance, he analyzed how the specifics of each ballpark worked for and against its home pitchers. The Oakland Coliseum, for example, had a huge foul territory that was still in play rather than in the seats. He calculated the number of free outs a pitcher would get over a set number of innings from popups that infielders could catch but that would have landed in the stands anywhere else. Things like that. Just a really smart, obsessive, geeky guy. And a funny and opinionated writer, too.

Anyway, I remember one excerpt of an essay (I’ll butcher it in paraphrase) in which he talked about guys in the stands at ballgames watching a shortstop misplay a ball and saying, “Man, I coulda had that.” James, in response, wrote this wonderfully scathing little piece about how nobody in the stands at a baseball game has any real way of knowing just how good those guys on the field are. And he used statistical analysis to explain it.

There were 26 teams in the major leagues in the 1980s, and each team had a season-long roster of 25 players. That’s a total of 650 major league baseball players, out of (at that time) 80 million adult American men, plus another 50 million or so throughout Latin America. So 650 players out of a hundred thirty million in the eligible pool means that those guys, even a season-long benchwarmer with the woeful 1985 Pittsburgh Pirates (who won 57 games and lost 104), were in the top 0.0005% of baseball players in the eligible community. That is to say, there’s one major leaguer among every two hundred thousand of us. You can be awfully, awfully good… and yet not nearly good enough. “One in a million” is nearly literal.
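The roster arithmetic above is easy to check. Here is a small Python sketch that uses only the figures given in the paragraph (26 teams, 25-man rosters, an eligible pool of about 130 million); the function name `roster_odds` is just for illustration.

```python
def roster_odds(teams: int, roster_size: int, eligible_pool: int):
    """Return (number of players, share of the pool, 'one in N') for a league."""
    players = teams * roster_size          # total major leaguers
    share = players / eligible_pool        # fraction of the pool who make it
    one_in = eligible_pool / players       # "one major leaguer among every N of us"
    return players, share, one_in


# 1980s figures as given in the text: ~80M adult US men plus ~50M in Latin America.
players, share, one_in = roster_odds(teams=26, roster_size=25, eligible_pool=130_000_000)
print(players)                # 650
print(f"{share:.4%}")         # 0.0005%
print(f"1 in {one_in:,.0f}")  # 1 in 200,000
```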

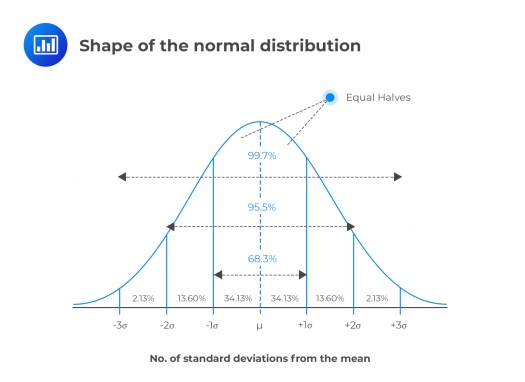

A normally distributed population forms a Gaussian (bell) curve, with the mean (which, for a normal distribution, is also the median) at the center and a symmetrical fall-off to both sides. One’s position within that distribution can be described by a Z-score, which is simply the number of standard deviations one sits below or above the mean. At one in two hundred thousand, major league baseball players are the men more than about 4.4 standard deviations above the mean (Z > 4.4), a community of stunning outliers.
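Turning a rarity like “one in two hundred thousand” into a Z-score means inverting the tail of the normal curve. Below is a minimal sketch using Python’s standard-library `statistics.NormalDist`, assuming (as the paragraph does) that ability is normally distributed; the helper names are hypothetical. Under that assumption, a one-in-200,000 cutoff lands at roughly 4.4 standard deviations, while Z > 5 would correspond to something closer to one in 3.5 million.

```python
from statistics import NormalDist

STANDARD_NORMAL = NormalDist(mu=0.0, sigma=1.0)


def z_for_top_fraction(p: float) -> float:
    """Z-score whose upper tail holds a fraction p of a normal population.

    By symmetry, the upper-tail cutoff is minus the lower-tail quantile,
    which avoids computing 1 - p for very small p.
    """
    return -STANDARD_NORMAL.inv_cdf(p)


def top_fraction_for_z(z: float) -> float:
    """Fraction of a normal population lying more than z SDs above the mean."""
    return STANDARD_NORMAL.cdf(-z)


# Major leaguers as a share of the eligible pool (650 out of 130 million).
print(round(z_for_top_fraction(650 / 130_000_000), 2))  # about 4.42
# How rare a Z > 5 cutoff would actually be.
print(f"1 in {1 / top_fraction_for_z(5):,.0f}")          # roughly 1 in 3.5 million
```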

Baseball is maybe more objective than writing, but still, as writers, we’re working to enter a community of stunning outliers. It’s estimated that about 50,000 novels are commercially published in the US each year. Literary agent Miriam Altschuler claims that 70% of those sell 2,000 copies or fewer, which means you’ve never heard of them (and she’d go broke if she tried to represent them). So all of us writers are applying to enter a tiny community, most of whose seats are already held by tenured incumbents. You’re not going to displace Margaret Atwood or Stephen King on any publisher’s roster. (I hope that’s not news to you…)

When we’re sitting in the stands, reading some shabby novel, we say things like, “I could do that.” But really… could we? How would we know? Who would tell us our Z-score?

Let’s think of it as a series of filters.

- We start with the 200 million American adults and knock that down to the ten percent who read the most fiction. That’s twenty million.

- Now let’s take the ten percent of that group who imagine that they, too, could write professional-quality fiction. That’s two million.

- Now let’s take the ten percent of THAT group who actually have the time and the commitment to produce a full-length manuscript. That’s two hundred thousand.

- Ten percent of that is 20,000, which is still way more than the number of publishable slots left after you subtract all the Atwoods and Kings and Grishams and Oateses. Another ten percent cut, down to two thousand, is probably closer to the real number for all of us wannabe “debut authors.”
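The funnel is just repeated multiplication, and its final count can be turned into a Z-score the same way as the baseball figure. Here is a rough Python sketch, assuming every filter keeps exactly the author’s guessed ten percent and that the underlying talent is normally distributed; the stage labels and the names `run_funnel` and `upper_tail_z` are illustrative, not from the original.

```python
from statistics import NormalDist

# Each stage keeps 10% of the one before it, per the rough guesses above.
STAGES = [
    ("American adults", 1.0),
    ("heaviest fiction readers", 0.10),
    ("readers who think they could write it", 0.10),
    ("those who finish a manuscript", 0.10),
    ("ten percent of the finishers", 0.10),
    ("plausible debut-author slots", 0.10),
]


def run_funnel(start: int, stages) -> list[tuple[str, int]]:
    """Apply each keep-fraction in turn and record the surviving count."""
    counts = []
    current = start
    for label, keep in stages:
        current = round(current * keep)  # round to whole people
        counts.append((label, current))
    return counts


def upper_tail_z(p: float) -> float:
    """Z-score above which a fraction p of a normal population lies."""
    return -NormalDist().inv_cdf(p)


funnel = run_funnel(200_000_000, STAGES)
for label, n in funnel:
    print(f"{label:<40} {n:>12,}")

# Compare the final odds with the baseball figure from earlier in the post.
novelist_share = funnel[-1][1] / funnel[0][1]  # 2,000 out of 200 million
ballplayer_share = 650 / 130_000_000           # 650 out of 130 million
print(f"novelist Z ~ {upper_tail_z(novelist_share):.2f}")
print(f"ballplayer Z ~ {upper_tail_z(ballplayer_share):.2f}")
```

On these assumptions the novelist’s cutoff comes out at about 4.3 standard deviations against about 4.4 for the ballplayer, roughly in line with the comparison in the text.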

The Z-score for a new novelist is almost as high as for a major league baseball player. And yet, we write.

More tomorrow.
